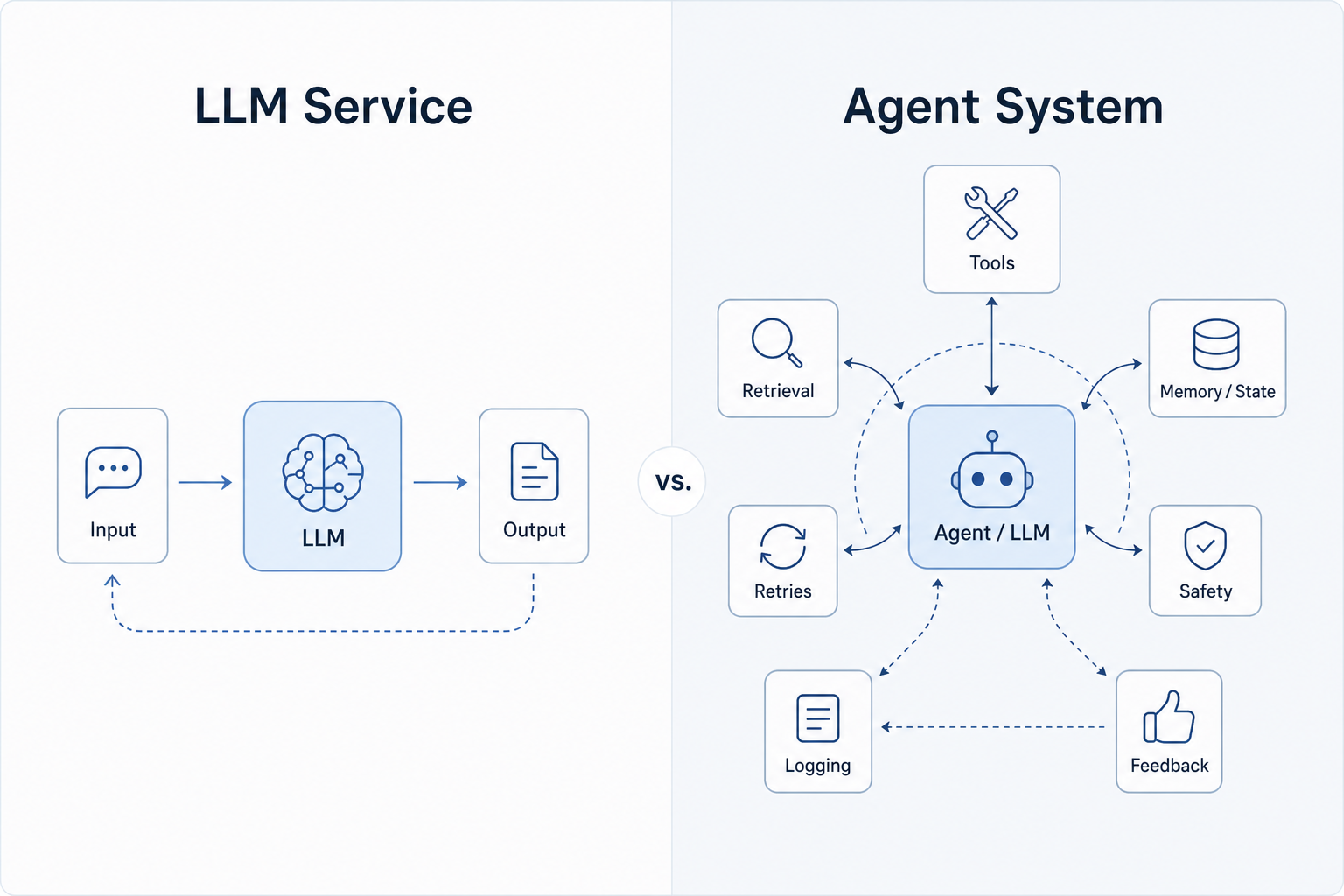

IBM Technology’s video “The 7 Skills You Need to Build AI Agents” makes a point that feels increasingly true: if an agent can act in the real world, prompt writing is only the starting point.

The more useful framing is this: prompt engineering is the recipe, but agent engineering is the kitchen.

A production-grade agent needs structure, contracts, failure handling, traceability, and a clear product experience. In other words, it needs engineering discipline.

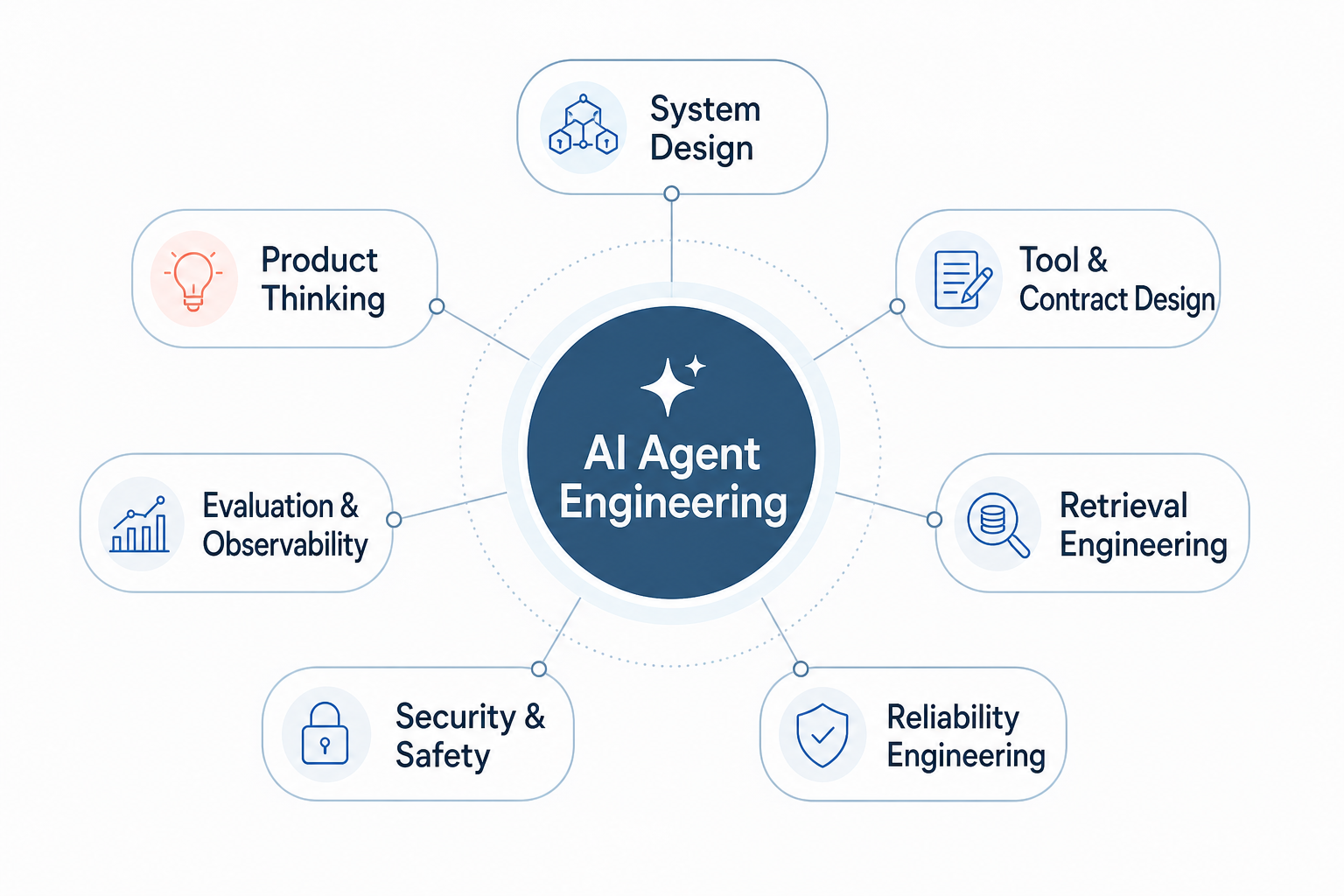

The 7 skills

For engineers who are new to agentic systems, I’d turn the seven skills into these practical rules:

1) System design

- Start with one control loop: state in, tool call out, result back, state updated.

- Keep planning, execution, and persistence separate so failures are easy to trace.
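To make the control loop concrete, here is a minimal sketch of my own, not something shown in the video. The `plan` function is a hard-coded stand-in for a model call, and `AgentState`, `TOOLS`, `execute`, `persist`, and `run` are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional, Tuple


@dataclass
class AgentState:
    goal: str
    history: list = field(default_factory=list)  # (tool, args, result) tuples
    done: bool = False


# Hypothetical tool registry: tool name -> plain Python callable.
TOOLS = {
    "add": lambda a, b: a + b,
}


def plan(state: AgentState) -> Optional[Tuple[str, dict]]:
    """Planning: pick the next tool call, or None to stop.
    Hard-coded here; a real agent would ask a model."""
    if not state.history:
        return ("add", {"a": 2, "b": 3})
    return None


def execute(tool: str, args: dict):
    """Execution: run exactly one tool call."""
    return TOOLS[tool](**args)


def persist(state: AgentState, tool: str, args: dict, result) -> None:
    """Persistence: record the step so a failure can be traced later."""
    state.history.append((tool, args, result))


def run(state: AgentState, max_steps: int = 5) -> AgentState:
    """State in, tool call out, result back, state updated."""
    for _ in range(max_steps):
        step = plan(state)
        if step is None:
            state.done = True
            break
        tool, args = step
        result = execute(tool, args)
        persist(state, tool, args, result)
    return state
```

Because the three functions are separate, a bad outcome can be attributed to a wrong plan, a failed execution, or a missing record, rather than to "the agent" as a whole. The `max_steps` bound is there so a confused planner cannot loop forever.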

2) Tool and contract design

- Treat tools like strict APIs. OpenAPI exists for exact request/response contracts, not loose suggestions.

- Give the model the smallest safe tool set; validate every parameter before execution.
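As a rough illustration of validating every parameter before execution, the sketch below uses a hand-rolled schema with only the standard library. The `get_weather` tool, its fields, and the limits are assumptions I made up for the example; in practice you would likely generate the checks from an OpenAPI or JSON Schema definition.

```python
class ToolValidationError(ValueError):
    """Raised when a model-proposed tool call violates the contract."""


# Hypothetical contract: the only tool exposed, and a validator per parameter.
TOOL_SCHEMAS = {
    "get_weather": {
        "city": lambda v: isinstance(v, str) and 0 < len(v) <= 80,
        "units": lambda v: v in ("metric", "imperial"),
    },
}


def validate_call(tool: str, args: dict) -> dict:
    """Return args unchanged if the call matches the contract, else raise."""
    if tool not in TOOL_SCHEMAS:
        raise ToolValidationError(f"unknown tool: {tool!r}")
    schema = TOOL_SCHEMAS[tool]

    # Reject both missing and unexpected parameters: the contract is exact.
    missing = sorted(set(schema) - set(args))
    unexpected = sorted(set(args) - set(schema))
    if missing or unexpected:
        raise ToolValidationError(
            f"{tool}: missing={missing} unexpected={unexpected}"
        )

    for name, is_valid in schema.items():
        if not is_valid(args[name]):
            raise ToolValidationError(f"{tool}: invalid value for {name!r}")
    return args
```

Calling `validate_call("get_weather", {"city": "Paris", "units": "metric"})` passes, while an unknown tool name, a missing field, or `units="kelvin"` raises before anything is executed.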

3) Retrieval engineering

- Retrieval quality sets the ceiling for the agent. Use chunking, metadata, and reranking rather than embeddings alone.

- Check whether the right evidence was retrieved before tuning prompts.
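One simple way to check whether the right evidence was retrieved is to measure recall@k on a small hand-labeled set before touching the prompt. The sketch below assumes a hypothetical `retrieve` callable that returns ranked document IDs; the function names and the labeled data format are mine.

```python
def recall_at_k(retrieved_ids, relevant_ids, k):
    """Fraction of the relevant documents that appear in the top k results."""
    relevant = set(relevant_ids)
    if not relevant:
        raise ValueError("need at least one relevant document per query")
    top_k = set(retrieved_ids[:k])
    return len(top_k & relevant) / len(relevant)


def evaluate_retriever(retrieve, labeled_queries, k=3):
    """Score a retriever on {query: set_of_relevant_ids}.

    `retrieve` is any callable mapping a query string to a ranked
    list of document IDs (a stand-in for your real retrieval stack).
    """
    return {
        query: recall_at_k(retrieve(query), relevant, k)
        for query, relevant in labeled_queries.items()
    }
```

A query that scores 0.0 here is a retrieval problem, and no amount of prompt tuning will supply evidence the model never saw.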

4) Reliability engineering

- Expect timeouts, rate limits, and duplicate calls. Make actions idempotent and add bounded retries.

- Use SLOs and error budgets to decide when to degrade or stop.
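Here is a small sketch of bounded retries combined with an idempotency key, so a duplicate call returns the stored result instead of repeating the action. The names (`run_idempotent`, `TransientError`) are hypothetical, and the in-memory dict is an assumption for the example; a real system would need a durable store, and the downstream service would also need to honor the key for retries to be truly safe.

```python
import time


class TransientError(Exception):
    """A failure worth retrying, such as a timeout or a rate limit."""


# In-memory record of completed actions, keyed by idempotency key.
# Illustration only: this does not survive a restart.
_completed = {}


def run_idempotent(key, action, max_attempts=3, base_delay=0.01, sleep=time.sleep):
    """Run `action` at most once per key, retrying transient failures."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    if key in _completed:
        return _completed[key]  # duplicate call: reuse the earlier result

    for attempt in range(1, max_attempts + 1):
        try:
            result = action()
        except TransientError:
            if attempt == max_attempts:
                raise  # bounded: give up and let the caller degrade or stop
            sleep(base_delay * 2 ** (attempt - 1))  # exponential backoff
        else:
            _completed[key] = result
            return result
```

The retry bound matters as much as the retry itself: once the attempts are exhausted, the error propagates so the caller can decide whether to degrade or stop.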

5) Security and safety

- Assume prompt injection will be attempted, and treat model output as untrusted input to any downstream code. Keep untrusted text separate from tool instructions.

- Use least privilege, allow-lists, and human approval for high-impact actions.
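The second rule can be sketched as a small authorization gate that runs before any tool call. The tool names and the three outcomes below are hypothetical, chosen only to show the shape: anything not on a list is denied by default, and high-impact tools need a named human approver.

```python
# Hypothetical allow-lists. Anything not listed is denied by default.
READ_ONLY_TOOLS = {"search_docs", "get_order_status"}
HIGH_IMPACT_TOOLS = {"issue_refund", "delete_account"}


def authorize(tool, approved_by=None):
    """Return 'allow', 'needs_approval', or 'deny' for a proposed tool call."""
    if tool in READ_ONLY_TOOLS:
        return "allow"
    if tool in HIGH_IMPACT_TOOLS:
        # High-impact actions run only after a human has signed off.
        return "allow" if approved_by else "needs_approval"
    return "deny"
```

For example, `authorize("issue_refund")` returns `"needs_approval"`, while `authorize("issue_refund", approved_by="alice")` returns `"allow"`. A tool the model invents, such as `"run_shell"`, is denied because it was never on a list.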

6) Evaluation and observability

- Log prompts, retrieved context, tool calls, and final outcomes. OpenTelemetry-style traces help connect the whole flow.

- Build offline evals early, then compare them with real user traces and failure cases.
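To show what "connecting the whole flow" can look like, here is a standard-library sketch that records one structured span per step, all sharing a trace ID. It imitates the idea behind OpenTelemetry traces but does not use the OpenTelemetry SDK; `span`, `SPANS`, and `handle_request` are hypothetical names, and the retrieval and tool results are placeholders.

```python
import time
import uuid
from contextlib import contextmanager

SPANS = []  # in a real system these records would go to a tracing backend


@contextmanager
def span(trace_id, name, **attributes):
    """Record one step of the agent flow, including failures and duration."""
    record = {
        "trace_id": trace_id,
        "span_id": uuid.uuid4().hex[:16],
        "name": name,
        "attributes": attributes,
        "status": "ok",
    }
    start = time.perf_counter()
    try:
        yield record
    except Exception as exc:
        record["status"] = "error"
        record["error"] = repr(exc)
        raise
    finally:
        record["duration_ms"] = round((time.perf_counter() - start) * 1000, 3)
        SPANS.append(record)


def handle_request(query):
    """One request, one trace: retrieval, tool call, and final answer."""
    trace_id = uuid.uuid4().hex
    with span(trace_id, "retrieve", query=query) as s:
        s["attributes"]["doc_ids"] = ["d1", "d7"]  # placeholder result
    with span(trace_id, "tool_call", tool="get_order_status") as s:
        s["attributes"]["result"] = "shipped"  # placeholder result
    with span(trace_id, "final_answer") as s:
        s["attributes"]["text"] = "Your order has shipped."
    return trace_id
```

Filtering `SPANS` by `trace_id` then gives the full story of a single request, which is also the raw material for comparing offline evals with real failure cases.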

7) Product thinking

- Define success criteria before shipping. Anthropic’s prompt-engineering docs explicitly recommend clear success criteria and empirical tests.

- Add clarification, escalation, and graceful fallback paths so users can recover when the agent is uncertain.
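The recovery paths can be written down as an explicit decision rule rather than left to the prompt. The sketch below is one possible policy, not a recommendation from the video; the inputs, the 0.7 threshold, and the limit of two clarifying questions are assumptions for illustration.

```python
def next_action(confidence, missing_fields, clarifications_asked,
                human_available, threshold=0.7, max_clarifications=2):
    """Pick one of: 'clarify', 'answer', 'escalate', 'fallback'."""
    # Ask the user first, but only a bounded number of times.
    if missing_fields and clarifications_asked < max_clarifications:
        return "clarify"
    # Answer only when nothing is missing and confidence is high enough.
    if not missing_fields and confidence >= threshold:
        return "answer"
    # Otherwise hand off to a person if one is available.
    if human_available:
        return "escalate"
    # Last resort: a graceful fallback, such as a safe default message.
    return "fallback"
```

For instance, a request missing an order ID leads to `"clarify"` on the first two turns and `"escalate"` after that, so the user is never stuck in a loop of questions.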

What I liked most is that the video refuses to romanticize agents. Real systems need boundaries. They need retries, fallbacks, logs, and a human-centered experience.

That also suggests a useful debugging instinct: when an agent fails, trace backward before you rewrite the prompt. Was the right document retrieved? Was the tool schema clear? Did the system fail before the model even had a chance to help?

What to study next

If you want to go deeper, these are good companion resources:

2) Tool and contract design

- OpenAPI Specification for strict request/response contracts

5) Security and safety

- OWASP Top 10 for Large Language Model Applications

My takeaway

The title “prompt engineer” still describes a useful entry point, but it no longer describes the full job.

If we want agents that people can trust, we need to think like engineers, product builders, and system designers at the same time.

Source

- YouTube: https://youtu.be/mtiOK2QG9Q0?si=ITxYMB-1FnRJpcGp

- Video title: The 7 Skills You Need to Build AI Agents

- Channel: IBM Technology